I think all neuroscientists, all philosophers, all psychologists, and all psychiatrists should basically drop whatever they’re doing and learn Selen Atasoy’s “connectome-specific harmonic wave” (CSHW) framework. It’s going to be the backbone of how we understand the brain and mind in the future, and it’s basically where predictive coding was in 2011, or where blockchain was in 2009. Which is to say, it’s destined for great things and this is a really good time to get into it.

I described CSHW in my last post as:

Selen Atasoy’s Connectome-Specific Harmonic Waves (CSHW) is a new method for interpreting neuroimaging which (unlike conventional approaches) may plausibly measure things directly relevant to phenomenology. Essentially, it’s a method for combining fMRI/DTI/MRI to calculate a brain’s intrinsic ‘eigenvalues’, or the neural frequencies which naturally resonate in a given brain, as well as the way the brain is currently distributing energy (periodic neural activity) between these eigenvalues.

This post is going to talk a little more about how CSHW works, why it’s so powerful, and what sorts of things we could use it for.

CSHW: the basics

All periodic systems have natural modes: frequencies they ‘like’ to resonate at. A tuning fork is a very simple example of this: regardless of how it’s hit, most of the vibration energy quickly collapses to one frequency, the natural resonant frequency of the fork.

All musical instruments work on this principle; when you change the fingering on a trumpet or flute, you’re changing the natural resonances of the instrument. In the video below you can sort of ‘see’ this resonance:

Here we see time-averaged standing waves (resonance) on the front plate of a guitar:

And here are some of the elegant mathematical relationships between the notes a guitar string is made to resonate at (assuming a ‘just temperament’ tuning):
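For the simplest case these relationships can be written down directly. The snippet below is a minimal sketch assuming an ideal string, whose n-th harmonic sits at n times the fundamental; the 110 Hz fundamental and the function names are my own illustrative choices.

```python
from fractions import Fraction

def string_harmonics(fundamental_hz, count):
    """Frequencies of the first `count` harmonics of an ideal string."""
    return [n * fundamental_hz for n in range(1, count + 1)]

def adjacent_ratios(count):
    """Frequency ratios between successive harmonics: 2/1, 3/2, 4/3, ..."""
    return [Fraction(n + 1, n) for n in range(1, count)]

# Hypothetical example: a string with a 110 Hz fundamental.
harmonics = string_harmonics(110.0, 5)
ratios = adjacent_ratios(5)
```

The ratios between neighbouring harmonics (2:1, 3:2, 4:3, 5:4) are the just-intonation octave, perfect fifth, perfect fourth, and major third.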

CSHW’s big insight is that brains have these natural resonances too, although they differ slightly from brain to brain. And instead of some external musician choosing which notes (natural resonances) to play, the brain sort of ‘tunes itself,’ based on internal dynamics, external stimuli, and context.

The beauty of CSHW is that it’s a quantitative model, not just loose metaphor: neural activation and inhibition travel as an oscillating wave with a characteristic wave propagation pattern, which we can reasonably estimate, and the substrate in which they propagate is the brain’s connectome (map of neural connections), which we can also reasonably estimate.
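To make the ‘quantitative’ part concrete, here is a toy sketch. It assumes the harmonics can be treated as the eigenvectors of the graph Laplacian of the connectome, which is my reading of the approach rather than a reproduction of Atasoy’s actual pipeline. The six-region ring ‘connectome’ and the function name `connectome_harmonics` are made up for illustration.

```python
import numpy as np

def connectome_harmonics(adjacency):
    """Eigen-decompose the graph Laplacian L = D - A of a symmetric graph.

    Returns eigenvalues in ascending order (the 'frequencies' in this toy
    picture) and the matching eigenvectors as columns (the 'harmonics').
    """
    adjacency = np.asarray(adjacency, dtype=float)
    degree = np.diag(adjacency.sum(axis=1))
    laplacian = degree - adjacency
    return np.linalg.eigh(laplacian)

# Hypothetical toy connectome: six regions connected in a ring.
n_regions = 6
ring = np.zeros((n_regions, n_regions))
for i in range(n_regions):
    j = (i + 1) % n_regions
    ring[i, j] = 1.0
    ring[j, i] = 1.0

eigenvalues, harmonics = connectome_harmonics(ring)
```

In this toy case the lowest harmonic is spatially constant and higher ones oscillate more rapidly across the ring. A real connectome would replace `ring` with an estimated structural connectivity matrix.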

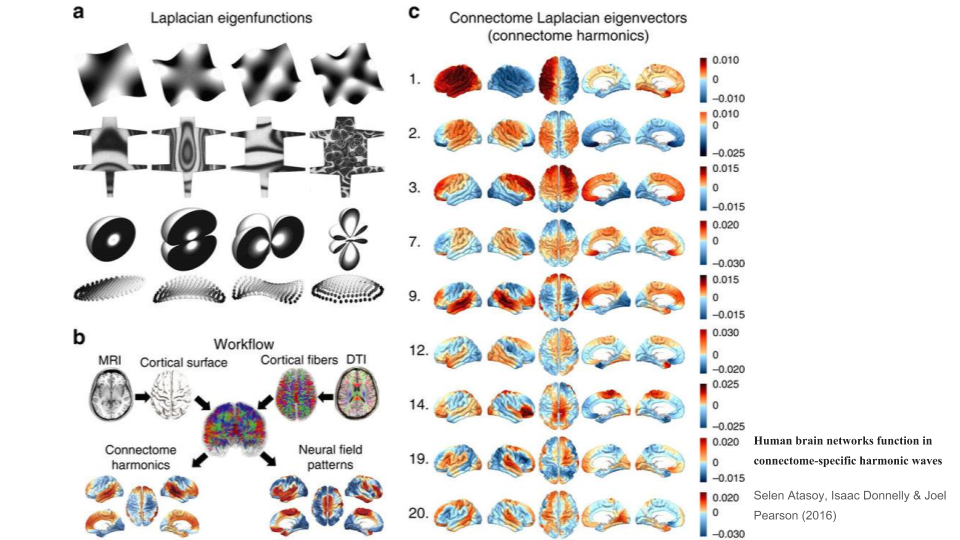

This means we can calculate (fairly) precisely which frequencies will be naturally resonant in a given brain. And Atasoy has done just that, as this fancy graphic shows:

Why is a harmonic analysis of the brain so powerful?

In my last post I mentioned that:

[I]t seems a priori plausible that systems like brain with significant periodicity will self-organize around their eigenvalues; i.e. these eigenvalues will be functionally significant and ‘costless’ Schelling points. This implies that these harmonics will be a good place to start if we want to efficiently compress a lot of the brain’s (and mind’s) complexity.

I would add that harmonic analysis of the brain is particularly powerful because harmonics follow highly elegant mathematical rules, and insofar as the brain self-organizes around them, the rest of the brain will have a hidden elegance, a hidden simplicity, to it as well.

The problem facing neuroscience in 2018 is that we have a lot of experimental knowledge about how neurons work– and we have a lot of observational knowledge about how people behave– but we have few elegant compressions for how to connect the two. CSHW promises to do just that, to be a bridge from bottom-up neural dynamics – things we can measure – to high-level psychological/phenomenological/psychiatric phenomena – things we care about. And a bottom-up bridge like this should also allow continuous improvement as our understanding of the fundamentals improves, as well as significant unification across disciplines: instead of psychology, psychiatry, philosophy, and so on each having their own (slightly incompatible) ontologies, a true bottom-up approach can unify these different ways of knowing and serve as a common platform, a lingua franca for high-level brain dynamics.

(Also on the topic of building theoretical bridges, see my recent thoughts on how we could approach unifying CSHW with other paradigms like IIT & FEP.)

What types of new things could we do with CSHW?

CSHW is still a very young paradigm, and so far research has reasonably focused on the foundations: how it works and straightforward applications. Atasoy & coauthors have looked at the natural power distribution between harmonics, how this changes between resting state brain activity vs psychedelic (LSD) brain activity, and how this pushes the brain towards self-organized criticality. All novel and important results. But if CSHW is as promising as it seems to me, this initial frame dramatically undersells the potential of the paradigm.
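As a rough illustration of what a ‘power distribution between harmonics’ means, the sketch below projects a snapshot of activity onto a set of harmonics and normalizes the squared coefficients. It assumes the harmonics are Laplacian eigenvectors of a toy four-region chain. The chain, the activity pattern, and the name `harmonic_power` are all hypothetical, and this is not the published analysis.

```python
import numpy as np

def harmonic_power(activity, harmonics):
    """Fraction of an activity pattern's energy carried by each harmonic.

    `harmonics` holds orthonormal basis vectors as columns; the result sums to 1.
    """
    coefficients = harmonics.T @ activity
    power = coefficients ** 2
    return power / power.sum()

# Hypothetical toy connectome: four regions in a chain (a path graph).
adjacency = np.array([
    [0.0, 1.0, 0.0, 0.0],
    [1.0, 0.0, 1.0, 0.0],
    [0.0, 1.0, 0.0, 1.0],
    [0.0, 0.0, 1.0, 0.0],
])
laplacian = np.diag(adjacency.sum(axis=1)) - adjacency
_, harmonics = np.linalg.eigh(laplacian)

# Made-up activity snapshot: mostly harmonic 1, with some harmonic 3.
activity = 2.0 * harmonics[:, 1] + 1.0 * harmonics[:, 3]
power = harmonic_power(activity, harmonics)
```

Comparing such distributions between conditions (say, resting state vs LSD) is the kind of analysis described above, though real data would need far more care than this toy projection.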

The following is a compilation of about a year’s worth of thoughts about what could be done with CSHW. It assumes a reasonable conceptual familiarity with the CSHW paradigm; for more background, see this video and these papers.

I. Proxy phenomenological structure.

The Holy Grail of various brain sciences (and a topic of great interest to QRI) is a clear, intuitive method for connecting what’s happening in the brain to what’s happening in the mind. CSHW is interesting here since the brain likely self-organizes around its characteristic frequencies, so changes in these frequencies (and the distribution of power between them) should ripple through phenomenology, likely in predictable ways. Insofar as this ‘harmonic scaffolding’ hypothesis is true, CSHW could form the seed to a formal science of phenomenology.

To me, the most obvious starting point is QRI’s Symmetry Theory of Valence (STV), that consonance between the natural harmonics of a brain will be a good proxy for that experience’s overall degree of pleasantness (Johnson 2016; extended by Gomez Emilsson 2017). Atasoy herself is interested in explaining psychedelic effects as an increase in criticality and as shifts in the power distribution between harmonics (Atasoy et al. 2016; 2017). A natural fusion of these approaches is to parametrize the effects (and ‘phenomenological texture’) of all psychoactive drugs in terms of their effects on the consonance, dissonance, and noise of a brain, both in overall terms and within different frequency bands (Gomez Emilsson 2017).
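One way to make ‘consonance vs dissonance’ computable is a pairwise roughness score summed over a spectrum. The sketch below uses a roughness curve in the style of Sethares’s model of sensory dissonance. The constants are illustrative, the frequencies are audio-range stand-ins, and whether any such curve transfers to brain harmonics is an open assumption, not something STV or CSHW has established.

```python
import math
from itertools import combinations

def pair_roughness(f1, f2, a1=1.0, a2=1.0):
    """Roughness of two pure tones: zero at unison, peaks at a small
    frequency gap, then decays. Constants are illustrative assumptions."""
    f_low, f_high = sorted((f1, f2))
    scale = 0.24 / (0.021 * f_low + 19.0)
    x = scale * (f_high - f_low)
    return a1 * a2 * (math.exp(-3.5 * x) - math.exp(-5.75 * x))

def total_dissonance(spectrum):
    """Sum pairwise roughness over a spectrum of (frequency, amplitude) pairs."""
    return sum(
        pair_roughness(fa, fb, aa, ab)
        for (fa, aa), (fb, ab) in combinations(spectrum, 2)
    )

# Hypothetical spectra: a perfect fifth (3:2) and a minor second (16:15).
fifth = [(440.0, 1.0), (660.0, 1.0)]
minor_second = [(440.0, 1.0), (440.0 * 16 / 15, 1.0)]
fifth_score = total_dissonance(fifth)
minor_second_score = total_dissonance(minor_second)
```

Under these assumptions the minor second scores as far rougher than the fifth. A consonance-based valence proxy would apply the same kind of scoring to the power-weighted harmonics of a brain instead of to musical tones.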

In the long term, we’ll want to move upstream and predict connectome-specific effects of drugs- treating psychoactive substances as operators on neuroacoustic properties, which produce region-by-region changes in how waves propagate in the brain (and thus different people will respond differently to a drug, because these sorts of changes will generate different types of results across different connectomes). Essentially, this would involve evaluating how various drugs change the internal parameters of the CSHW model, instead of just the outputs. Moving upstream like this might be necessary to predict why e.g. some people respond well to a given SSRI, while others don’t (nobody has a clue how this works right now).

II. Understand and improve mental health

The most important future theme in psychiatry is “What you can measure, you can manage.” If connectome harmonics are as tightly coupled with phenomenology as we think, then we may be able to identify a target emotional state for someone, identify what about their connectome harmonics is different from that state, and use this to inform us how to push the system toward that state.

I’m increasingly suspecting that many psychiatric illnesses will leave a semi-unique fingerprint on someone’s connectome harmonics. Furthermore, connectome harmonics may be sufficiently coupled to the causal structure of these illnesses that adjusting the system to eliminate this fingerprint may actually cure the illness. It would be difficult for me to overstate how important I think this is.

In Quantifying Bliss, my colleague Andrés discusses using CSHW to parametrize and measure mental health (with real-time debugging):

The “clinical phenomenologist” of the year 2050 might look into your brain harmonics, and try to find the shortest paths to nearby state-spaces with less chronic dissonance, fishing for high-consonance attractors with large basins to shoot for. The qualia expert would go on to provide you various options that may improve all sorts of metrics, including valence, the most important of them all. If you ask, your phenomenologist can give you trials for fully reversible treatments. You sample them in your own time, of course, and test them for a day or two before deciding whether to use these moods for longer.

Likewise, if we build a suite of methods for parametrizing and replicating phenomenological states with CSHW, we could presumably use this to replicate the psychoactive (and psychedelic) effects of various drugs, without the drugs and without their broad-spectrum side-effects. Opioid painkillers without the chemical addictiveness; MDMA without the neurotoxicity. And new drug effects that current pharmaceuticals are unable to create. I call this class of interventions “patternceuticals” in Principia Qualia.

Finally, it’s not unreasonable to think that we may be able to use CSHW to better understand limited aspects of someone’s physical health, as well. For example, inflammation looks like it can cause depression, but perhaps it goes the other way too: depression causing inflammation, in a very precise and mechanistic way. If we’re right that harmony is the natural homeostatic state of our brain, then persistent dissonance would indicate a threat or injury, something to mobilize resources against and fight, and the dissonance itself may be sufficient to kickstart this defensive process. In this case we should expect to find mechanisms which activate both global hormones like cortisol, and local defense mechanisms like nearby microglia releasing cytokines, in response to irregular (dissonant) neural firings, perhaps with high-energy beat patterns as the specific trigger. The core intuition here is that connectome harmonics will have consequences at all levels of biology, and some of these consequences can be predicted a priori.

III. New science of psychometrics & psychodynamics

For over a hundred years now, we’ve been trying to figure out how to systematize the structure of variation between people’s minds and brains. This has led to a slew of psychometric frameworks, the two most robust being IQ and the Big 5 personality dimensions (Openness, Conscientiousness, Extroversion, Agreeableness, Neuroticism). Both are top-down frameworks built on observational data and factor analysis.

But with CSHW, if we’re able to more elegantly model the ‘neurological natural kinds’ which generate our cognitive, affective, social, and phenomenological dynamics in bottom-up ways, we should be able to improve on these metrics, and generate new metrics for interesting dimensions of variation that have eluded formal measurement so far.

From IQ to NaQ

As an opening move, I’d suggest that we could reconceptualize intelligence as NaQ (neuroacoustic quotient), or ‘the capacity to cleanly switch between different complex neuroacoustic profiles.’ This would envision the brain as analogous to a musical instrument that can (nigh-instantly and perfectly) retune itself from one complex key signature (set of connectome harmonics) to another, where the key signature corresponds to the parameters of some problem domain and harmony in this key signature corresponds to solving the task at hand.

Higher intelligence would encompass quicker & cleaner transitions, more complex neuroacoustic ‘key signatures,’ more flexibility and adaptability in configuration, greater numbers of subpartitions (orthogonal sets of connectome harmonics) within the brain and lower amounts of leakage between these subpartitions, and better harmony engineering (making the computation ‘flow’ in the right direction). Some of these capacities will be improved by practice and life experience (‘crystallized intelligence’) and some won’t (‘fluid intelligence’).

Steve Lehar has speculated that this sort of harmony-based computation could be implemented by modeling perceptions as spatio-temporal constraints on frequencies which in turn constrain the possible resonances of the system, much like how putting a clamp on a Chladni plate constrains its resonances. There’s much more that could be said here about merging Lehar’s intuition with Atasoy’s CSHW, but we need not speculate too much about implementation to say interesting things about psychometrics.

I see two research angles here: we could start with the current testing methodology for IQ, and try to reverse-engineer what neuroacoustic properties each subtest is effectively testing for (Verbal Comprehension, Perceptual Reasoning, Working Memory, Processing Speed). This seems easiest. We could also start from the literature on CSHW and self-organizing (harmonic) systems, identify core principles (e.g., conditional metastability, integrated information, symmetry/harmony as success condition, orthogonalization of harmonics) that seem relevant for intelligence, and try to remake NaQ from the bottom up. This seems better long-term.

What’s the point? I suspect that understanding the algorithmic implementation of intelligence could help us better see what IQ tests are actually measuring, how to improve them such that they reflect the contours of reality more closely, and perhaps eventually how to improve (or how to prevent modern life from degrading!) various subtypes of intelligence. The value of even incremental advances here could be large.

An interesting variable is how much external noise is optimal for peak processing. Some, like Kafka, insisted that “I need solitude for my writing; not ‘like a hermit’ – that wouldn’t be enough – but like a dead man.” Others, like von Neumann, insisted on noisy settings: von Neumann would usually work with the TV on in the background, and when his wife moved his office to a secluded room on the third floor, he reportedly stormed downstairs and demanded “What are you trying to do, keep me away from what’s going on?” Apparently, some brains can function with (and even require!) high amounts of sensory entropy, whereas others need essentially zero. One might look for different metastable thresholds and/or convergent cybernetic targets in this case.

A nice definition of psychological willpower falls out of this paradigm as well: the depletable capacity to adjust harmonics, perhaps against a gradient of increasing Free Energy.

From emotional intelligence to EnQ & MQ

EQ (emotional intelligence quotient) isn’t very good as a formal psychological construct- it’s not particularly predictive, nor very robust when viewed from different perspectives. But there’s clearly something there– empirically, we see that some people are more ‘tuned in’ to the emotional & interpersonal realm, more skilled at feeling the energy of the room, more adept at making others feel comfortable, better at inspiring people to belief and action. It would be nice to have some sort of metric here.

I suggest breaking EQ into entrainment quotient (EnQ) and metronome quotient (MQ). In short, entrainment quotient indicates how easily you can reach entrainment with another person. And by “reach entrainment”, I mean how rapidly and deeply your connectome harmonic dynamics can fall into alignment with another’s. Metronome quotient, on the other hand, indicates how strongly you can create, maintain, and project an emotional frame. In other words, how robustly can you signal your internal connectome harmonic state, and how effectively can you cause others to be entrained to it. Empirically, women seem to have a higher EnQ (and are generally more sensitive to the energy in a room), whereas MQ might be more similar on average, with men being slightly higher (especially on the tails). Most likely, these are reasonably positively correlated; in particular, I suspect having a high MQ requires a reasonably decent EnQ. And importantly, we can likely find good ways to evaluate these with CSHW.
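A toy way to picture the EnQ/MQ split is a pair of coupled phase oscillators (a two-body Kuramoto-style model). This is purely my illustration, not part of CSHW: each person is reduced to a single natural frequency, `coupling_into_b` stands in for how strongly A’s signal pulls B (A’s ‘metronome’ strength combined with B’s receptivity), and all numbers are made up.

```python
import math

def simulate_pair(omega_a, omega_b, coupling_into_a, coupling_into_b,
                  dt=0.001, steps=20000):
    """Euler-integrate two coupled phase oscillators and return their
    final instantaneous frequencies (rad/s)."""
    theta_a, theta_b = 0.0, math.pi / 2
    for _ in range(steps):
        rate_a = omega_a + coupling_into_a * math.sin(theta_b - theta_a)
        rate_b = omega_b + coupling_into_b * math.sin(theta_a - theta_b)
        theta_a += rate_a * dt
        theta_b += rate_b * dt
    final_a = omega_a + coupling_into_a * math.sin(theta_b - theta_a)
    final_b = omega_b + coupling_into_b * math.sin(theta_a - theta_b)
    return final_a, final_b

# A is a pure 'metronome' (nothing pulls on A); B is pulled strongly by A.
strong_a, strong_b = simulate_pair(10.0, 12.0, 0.0, 5.0)

# Same pair, but A's pull on B is weaker than their frequency gap.
weak_a, weak_b = simulate_pair(10.0, 12.0, 0.0, 1.0)
```

With strong coupling B settles onto A’s frequency (entrainment); with weak coupling B keeps drifting near its own frequency. In this caricature, EnQ would be about how large your incoming coupling is, and MQ about how much coupling you induce in others.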

The neuroscientific basis for the Big 5

The Big 5 personality framework (Openness, Conscientiousness, Extroversion, Agreeableness, Neuroticism) is one of the crown jewels of psychology; it’s robust, predictive, and intuitive. But it’s a top-down construct born of factor analysis, not fundamentally grounded in neuroscience.

I don’t think the Big 5 fits cleanly under any one neuroscientific paradigm. Instead, we can take a grab-bag approach. First, there’s an interesting paper that attempts to describe each dimension in terms of cybernetic control theory, where the brain tries to keep certain internal proxies within some range. I think this is a reasonable explanation for some dimensions but not others. There’s also interesting work from affective neuroscience where the Big 5 are generated by different combinations of ‘axiomatic’ primal emotional drives.

But if I had to spitball it from the perspective of CSHW, I’d suggest the following:

- Openness: degree of canalization of the brain’s initial harmonic key signature.

- Conscientiousness: strength of internal drive toward consistency across connectome harmonic partitions.

- Extroversion: generally formulated as reward sensitivity; perhaps we could also model it as ease of switching between high-entrainment & high-metronome modes in social interactions; alternatively, whether interaction with others adds or drains Free Energy.

- Agreeableness: multifactorial; part of it seems to just be EnQ. I suspect this might be the least-robust factor of the Big 5.

- Neuroticism: degree to which one’s harmonic key signature is biased toward dissonance (and specifically the degree of speculative generation of high-frequency dissonance in world models).

Autism might be reconceptualized along two dimensions: first, most forms of autism would entail less general ability to reach interpersonal entrainment with another’s connectome harmonics- a lower EnQ. Second, most forms of autism would also entail a non-standard set of connectome harmonics. I.e., the underlying substructure of core harmonic frequencies may be different in people on the autism spectrum, and thus they can’t effectively reach social entrainment with ‘normal’ people, but some can with other systems (e.g. video games, specific genres of music), and some can with others whose substructure is non-standard in the same way. We can think of this as a game-theoretic strategy: groups which have some ‘harmonic diversity’ might find some forms of emotional and semantic synchronization more difficult, but this would preserve cognitive, behavioral, and social diversity, and produce more net comparative advantage.

The mathematics of signal propagation and the nature of emotions

High frequency harmonics will tend to stop at the boundaries of brain regions, and thus will be used more for fine-grained and very local information processing; low frequency harmonics will tend to travel longer distances, much as low frequency sounds travel better through walls. This paints a possible, and I think useful, picture of what emotions fundamentally are: semi-discrete conditional bundles of low(ish) frequency brain harmonics that essentially act as Bayesian priors for our limbic system. Change the harmonics, change the priors and thus the behavior. Panksepp’s seven core drives (play, panic/grief, fear, rage, seeking, lust, care) might be a decent first-pass approximation for the attractors in this system. (Speculatively, we could also add sleep as an eighth fundamental drive under this emotions-as-bundles-of-brain-harmonics-with-clear-attractors definition; likewise we might look to a harmonic analysis to explain why some people are completely missing certain emotions.)

On harmonic canalization and emotional key signatures

The core phenomenon which self-organizing harmonic systems leverage to self-organize is the ratio between their internal frequencies. As such, initial conditions matter, and in particular since low-frequency harmonics tend to entrain and synchronize high-frequency activity, the specific frequencies of ‘base tone’ (emotional) harmonics will determine much about which higher-frequency harmonics are consonant vs dissonant, and may highly constrain the possibility space of a given brain’s harmony gradient. This calls to mind my favorite passage from Kahlil Gibran: “We choose our joys and sorrows long before we experience them.” Perhaps it is not so much us doing the choosing, as the emotional key signature generated by the coldly-beautiful mathematics of our base harmonics.

How much variation in this is there across brains, how much is nature vs nurture, in what practical ways does this influence one’s emotional dynamics and emotional attractors, how could one characterize a typology and build a metric for this- these are all unknown and will be interesting to explore.

On aesthetics

I tend to believe a person’s aesthetic– what they find beautiful, and what they find ugly– is the closest thing to a carrier of personal identity we have. I am not my physical body; I am not my memories; I’m not even my brain. But as a lossy approximation, I would be okay with saying I am my aesthetic taste.

The promise of CSHW is that we might be able to characterize this, perhaps as connectome-specific harmony dynamics (the ‘emotional key signature’ I mention above). If we could in fact measure this, it would open up a world of applications: more inclusive personality metrics; connectome harmonic based methods for evaluating romantic compatibility; ‘backing up’ people’s emotional patterns and aesthetics in the case of stroke or degenerative disease; even modeling how various pieces of neurotechnology which could inject information into the brain (looking at you, Kernel and Neuralink) could be implemented without changing personality.

IV. Model interpersonal dynamics

How should we understand the neuroscience of human sociality and interaction? There’s an enormous amount of qualitative commentary on this, and some toy models involving things like mirror neurons, oxytocin, and such, but by and large this question has been very resistant to quantification. If, however, CSHW does describe the ‘deep contours’ of brain dynamics, brain harmonics will likely covary with social dynamics and could be a good foundation for building quantitative models.

The lowest-hanging fruit might be this: interpersonal compatibility seems to be about harmony in some deep respect. Maybe we could simply add two people’s connectome harmonics together and evaluate the result for consonance vs dissonance. This probably wouldn’t give a great result– a lot of interpersonal harmony seems to be about subtle dynamics, not resting-state activity. But it’d be worth a try.

I mentioned in my last post that:

I suspect the way our brains naturally model other brains is through modeling their connectome harmonics! That in studying CSHW we’re tapping into the same shortcuts for understanding other minds, the same compression schemas, that evolution has been using for hundreds of millions of years. This is a big claim, to be developed later.

I’m still bullish on this; when we model other people’s emotions, I think we’re simulating their connectome harmonics. (If our brains aren’t doing this, it would be like evolution leaving $100 bills on the sidewalk, which it rarely does.) Humans have white eyes so other humans can see where they’re looking; honest signals like this facilitate trust and social coordination. It seems plausible that this is true in the CSHW frame too, that the information people’s bodies naturally telegraph- their facial expressions, their vocal tone, their body language- could be fairly high-fidelity proxies for important aspects of their internal harmonics. This might also suggest that during conspecific rivalry– fights with other humans– emotions such as anger or jealousy would act to decorrelate our bodily ‘tells’ and our harmonics, in both large and subtle ways.

But more generally, I’d like to pose the question: what happens when two sets of connectome harmonics dance? When two people talk, or dance, or laugh, or debate, or fight, or make love, we can think of it as their connectome harmonics interacting — so how should we understand the typology of possible interactions, and the internal syntax of each interaction?

First, I suspect (with a nod to Section III above) we should consider humans as doing a complex mix of signal emission, signal entrainment, and signal filtering, and in a significant way, these signals ‘cash out’ in terms of their effects on connectome harmonics. Signals which affect high frequency harmonics mostly act as perceptual constraints on cognitive processing; signals which affect low frequency harmonics mostly act as drivers on emotional state.

Anecdotes: We can see the purpose of smalltalk here: it’s nominally the exchange of logical information, but the actual result is to drive two harmonic systems into entrainment with each other. At a recent party, I noticed two very distinct strategies people were employing to raise the mood of those around them: one friend, S, obviously was in a great mood and through loud talk and exaggerated gestures, was blasting out his mind music for the people around him to enjoy– an emotional metronome. Another friend, A, was listening very closely to the thoughts and feelings of the person he was talking to, and then reflecting back a more-harmonious version of that same pattern, cleaned of dissonance and noise– a dissonance filter. Adversarial interactions can also exist; driving someone into a state of internal dissonance is simply a matter of reversing these processes. Finally, the trick to socially navigating depression seems to be to avoid being an emotional metronome of bad feeling to those around you.

Second, as to the technical syntax and mechanics of psychosocial interactions, I suspect we should look at them through the lens of signal interactions within periodic systems. This would imply understanding interactions between connectome harmonics in terms of different types of attempted causal coupling, which could plausibly lead to a reasonably bounded typology of interactions. (There is more to say here, but it might be an information hazard and I suspect it’s better discussed in person.)

We might expect that some future social psychologist could use this CSHW frame to map out and characterize interactions at a social gathering: e.g., “That gentleman is chatting up that lady, trying to pull her into entrainment with his harmonics, and it looks like he’s succeeding; meanwhile over there, there’s a couple that’s psyching themselves up to go talk to that famous person over there, probably trying to ‘presynchronize’ with his harmonics, and over there …”

At any rate, if CSHW can be used to build a good quantitative model of human-human interactions, it might also be possible to replicate these dynamics in human-computer interactions. This could take a weak form, such as building computer systems with a similar-enough interactional syntax to humans that some people could reach entrainment with it; affective computing done right. It might also take a much stronger form: if we can cleanly map the actual behavioral state space and dynamics of an AI system to human-like connectome harmonic dynamics, we would have something intuitively predictable, in the same way that humans find other humans intuitively predictable– and perhaps even trustworthy, in the same way. Who knows– perhaps this could even lead to alignable AI.

V. New theory of language & meaning

Philosophers and linguists tend to understand the world in terms of the map and the territory; words and objects; language games and reality (although the distinction is not always clear). Philosophers like Hofstadter & Dennett (and arguably Friston) broaden this somewhat by attempting to ‘naturalize’ meaning and intentionality through mechanistic processes, describing how the territory might generate the map.

What is often missed is that language is a felt-sense phenomenon. When I say something to you, I am operating at the semantic level, but I’m also operating at the visceral level. My words are essentially reaching out and ‘plucking’ certain metaphorical strings of feeling. In a very real sense, my intention is to convey that “if you combine this feeling, with that feeling, you get such and such feeling” and success results in you realizing “oh yeah, when I combine those two feelings I do get such and such feeling!”

Different languages (and individuals) will have subtly different ways to do this, and may produce subtly different results; but there’s a lot of pressure for convergence too, both across individuals and across linguistic clusters. When a German says haus and an American says house, I think it’s pretty safe to say a very similar felt-sense is being channeled in each. (At least, this is very true for low-level phenomena everyone experiences regularly in their daily lives. For higher abstractions, not always.)

At any rate, instead of this:

I think we should be talking about this:

The ontology of words is clear. You’re reading them now. Philosophers squabble over the ontology of objects, but generally physics and folk psychology seem to be doing a good job dealing with things. But the ontology of the felt-senses attached to given word+object pairs is a lot less clear.

In the long run, perhaps a formal theory of qualia like IIT could offer something like objective data here, by generating a mathematical qualia-object that represents the characteristic ‘shape’ which arises in people when they say or hear a word. (Caveat: we might get better data with phrases, instead of single words.) But in the meantime, it might be possible to say some things about this with the CSHW framework: we might think of words as pointers to, or operators on, specific bundles of connectome harmonics; language is thus a network of such pointers&operators. Under this framing, I think we’d be able to explain a lot of things about a few words, and a few interesting things about most words.

Granted, it would be really hard to get good data here, and practically speaking there are better uses of research time. I think the real value is looking at what this might imply about the metaphysics of communication. E.g., it would strongly imply language as both a driver of, and dependent upon, the standardization of connectome harmonics, and might suggest some interesting things about how languages naturally lead to different ‘emotional timbres’. (See Section III for comments on how this might interact with the autism spectrum.)

The theme I find most interesting here is ontology as a system of relations between felt senses, and metaphysics as the relation between this network of felt-senses and the world. Shakespeare’s Hamlet famously admonished, “There are more things in heaven and earth, Horatio, than are dreamt of in your philosophy.” If Horatio has fewer felt-senses than there are things in the world, then his ontology will behave as a too-small blanket, able to cover any one piece of reality but unable to cover it all at the same time, and he’ll make all sorts of type errors. Perhaps we could reinterpret Wittgenstein’s Tractatus as essentially saying, ‘we should aim to have as many words as there are felt-senses, and as many felt-senses as there are things in the world, and all forms of confusion come from mismappings between these domains.’ Or maybe not; Wittgenstein is hard to pin down.

At any rate, there are plenty of threads we could pull here: we could try to understand the ‘characteristic connectome harmonic typology’ of different languages; we could use the word+object+felt-sense model to try to improve brain-computer interfaces; we could try to enrich communication by sonifying connectome harmonics, allowing an alternative intuitive appreciation for mind states.

Finally, I think we could get deep insight into the nature of the culture war: we might productively model politics, and all ideological conflict, as a vicious battle over the valence of these ‘felt senses’ and the logic of how they combine. Competing memeplexes have deep incompatibilities between their internal ‘felt-sense arithmetic’, which effectively constitute their boundaries and axes of conflict. Ontological holy war is about degrading the other side’s ability to think complex and/or adversarial thoughts, by desecrating and warping the meanings of their words, fragmenting the ‘key signature’ of their connectome harmonics. Brutal stuff.

Unknowns:

Since CSHW is a young paradigm (2016!), there’s a lot we don’t know about it. Some important question marks are:

- How invariant are these harmonics? How much do they vary within people depending on activity, mood, age, etc? And how much do they vary between people? How stable are they if we measure a brain two times in a row?

- There seem to be two ways the brain could change its harmonics: it could shift energy between static harmonics, or it could shift the actual frequencies of its harmonics. What’s the balance between these two strategies, and what are the rules of thumb for when it does the former vs the latter?

- Can we proxy Atasoy’s fMRI+DTI+MRI method with much cheaper (and more temporally accurate) EEG devices? What about if we get a ‘baseline’ reading once, with Atasoy’s full method, then use that to build an EEG proxy? Would we have to do this on a per-connectome basis, or could we fudge things with a good machine learning model? (The ‘wavenumber problem’ may be relevant here…)

- Atasoy’s method essentially outputs a time-averaged snapshot of someone’s connectome harmonics. How do we measure dynamics? Is a real-time derivation of someone’s connectome harmonics possible, given current theory?

- How can we improve the internal model parameters of CSHW? Can we borrow from the predictive coding / FEP paradigms to improve CSHW’s model of wave propagation in different regions of the brain?
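
To make the ‘two strategies’ question above a bit more concrete, here is a minimal toy sketch. It assumes, as I understand Atasoy’s framework, that connectome harmonics are the eigenvectors of the graph Laplacian of the connectome. Everything else is a stand-in: the six-node ring ‘connectome’, the edge weights, and the function names are hypothetical, and this is not Atasoy’s actual pipeline. It just shows the difference between moving energy among fixed harmonics and changing the harmonics themselves.

```python
import numpy as np

def graph_laplacian(weights):
    """Unnormalized graph Laplacian L = D - W for a symmetric weight matrix."""
    degree = np.diag(weights.sum(axis=1))
    return degree - weights

def harmonics(weights):
    """Laplacian eigenvalues (ascending) and eigenvectors (as columns)."""
    return np.linalg.eigh(graph_laplacian(weights))

def energy_per_harmonic(activity, modes):
    """Squared projection of an activity pattern onto each harmonic."""
    return (modes.T @ activity) ** 2

# Toy 'connectome' (hypothetical): 6 nodes on a ring, every edge weight 1.
n = 6
W = np.zeros((n, n))
for i in range(n):
    W[i, (i + 1) % n] = W[(i + 1) % n, i] = 1.0

eigvals, modes = harmonics(W)

# Strategy 1: the harmonics stay fixed, only the energy distribution changes.
smooth = np.ones(n)                                   # same value everywhere
alternating = np.array([1.0, -1.0, 1.0, -1.0, 1.0, -1.0])
e_smooth = energy_per_harmonic(smooth, modes)         # energy sits in the lowest mode
e_alt = energy_per_harmonic(alternating, modes)       # energy sits in the highest mode

# Strategy 2: the graph itself changes, so the eigenvalues shift.
W2 = W.copy()
W2[0, 1] = W2[1, 0] = 3.0                             # strengthen one connection
eigvals2, _ = harmonics(W2)

print("eigenvalues (original):", np.round(eigvals, 3))
print("eigenvalues (modified):", np.round(eigvals2, 3))
print("energy, smooth pattern:", np.round(e_smooth, 3))
print("energy, alternating pattern:", np.round(e_alt, 3))
```

In this toy, the first strategy leaves `eigvals` untouched and only changes which entries of the energy vector are large, while the second changes `eigvals` itself. Telling these apart in real data would presumably require the test-retest stability asked about in the first bullet.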

The Big Picture: investing in humans

Bryan Johnson (Kernel / OSfund) has A Plan For Humanity. (Don’t be alarmed, it’s pretty benevolent.) There are a lot of subtle details, but the core theme is that it’s safer for humanity to invest in the ‘human codebase’ and human-improvement ecosystem, rather than the AI codebase and ecosystem. I think this is both reasonable and important, and I endorse what he argues. But to intelligently and presciently invest in the human codebase & human-improvement ecosystem, you need a Paradigm. Maybe many paradigms, but at least one capital-P Paradigm. I think CSHW could be that.

Thanks to Andrés Gomez Emilsson, Sarah McManus, Adam Safron, and Romeo Stevens for comments on previous drafts of this work.

Wow! This is AWESOME! Just as I’ve been thinking for a while. The brain is NOT like a digital computer, it is more like a MUSICAL INSTRUMENT, with inputs and outputs, and a harmonious melodious principle of operation that co-mingles with the human soul, as if the soul was streaming energetically straight from the player’s soul, through the instrument, out into the world.

Music IS the language of the brain! Is there a “motor signal” hidden in a musical sound? Just play a deep jazz beat and watch that “motor signal” motivate a dance-floor of dancers, all swaying in synchrony to that same signal. Is there a “visual signal” hidden in musical sound? Look at the art and ornament of the different eras of art, and how they correlate with the same eras in music. Baroque music is Baroque art, played out in time. The redundant trills and scroll are manifest in each.

This is the future of neuroscience! And it merges with art!

Fantastic! We are very big fans of your work, Steven :) It’s a shame so few people have picked it up and built on top of your projective and harmonic resonance frameworks. But we at the Qualia Research Institute think that it is about time for it to come up to the surface, and that the connectome-specific harmonic wave empirical paradigm is a great candidate for the breakthrough to happen.

Do you have any thoughts about our work on quantifying valence? (Also happy to chat offline about it.)

Just discovered your site, thanks to an email tip-off. Awesome stuff!